Calibration Matters More Than Automation: What AI’s History Suggests About Building Agentic Research Systems

by George Jensen

Subscribe to get sharp thinking all about ResearchOps delivered straight to your email inbox. It’s free!

The ResearchOps Review is supported by User Interviews, now part of UserTesting. User Interviews makes it fast, easy, and affordable to recruit participants so you can scale research without sacrificing quality.

Whenever I hear someone use the term “AI,” I stop and ask them which type of AI they mean. “AI” could mean a surprisingly large range of things. It could mean large language model (LLM) prompting, agentic multi-step reasoning, image generation, classical machine learning, recommendation systems, predictive analytics, computer vision, or natural language processing in its older, pre-LLM forms. But they’re not one and the same thing. Unless you know precisely how the system is configured, or how to configure the system, AI can have vastly different capabilities, confidence calibrations, error profiles, readiness levels, and governance requirements.1

In distributed organizations, where knowledge and terminology are naturally fragmented across silos, establishing a shared glossary of AI classifications is one way to ensure aligned discussions and informed decision-making about AI. If my organization already has a glossary of terms and acronyms, I usually request that these classifications be added to it. If no glossary exists yet, I’m usually the first to make one. But making the right decisions about AI often requires more than a glossary to disambiguate terms.

To build successful AI-enabled research systems, you’ll need to understand the difference between probabilistic and deterministic AI systems, why shoehorning the former into the latter won’t work, and why aiming to “de-weird” AI isn’t the right strategy. Ironically, to better understand these themes, the best place to start is in the 1950s.

A Story of Two AI Models: Deterministic and Probabilistic

The term “artificial intelligence” was first coined by the American computer and cognitive scientist John McCarthy in the late 1950s, primarily to brand a funding proposal for the Dartmouth Conference, widely considered the founding event of artificial intelligence as a field. McCarthy’s work produced what’s called symbolic AI: an approach that uses symbols, rules, and logic to enable reasoning and is entirely dependent on explicit human programming: a deterministic model, unlike today’s deep-thinking AI, which relies on a probabilistic model.

Though McCarthy named the field, the AI model he developed largely sits outside the deep-thinking AI that’s currently reshaping the world as we know it. So, who does the current AI evolution belong to? For me, this history sits close to home, and it’s a key story to understanding the two models that drive AI and how to leverage AI to build research systems.

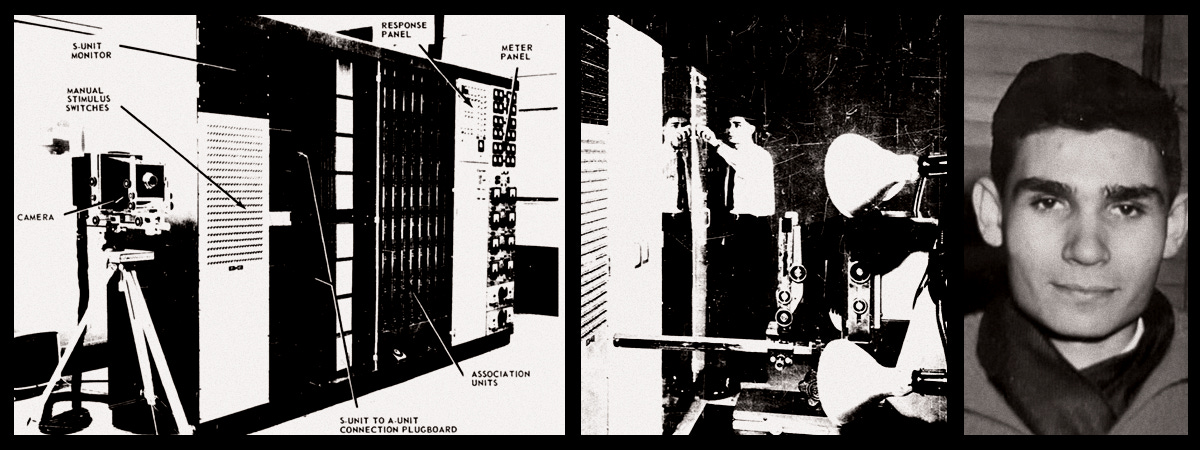

Circa 1959, my uncle Thomas Osborn (see Figure 1), a student at Cornell University, was hired by an American psychologist called Frank Rosenblatt, the father of machine learning. His job was to assemble, test, and run experiments on a large device called the Mark I Perceptron; recognized as the first artificial neural network device. The Perceptron was equipped with a grid of four hundred electronic “eyes” and processed crude snapshots of the letters of the alphabet. Instead of relying on a human to program the explicit definition of the alphabet, it physically adjusted its own internal paths until it taught itself how to recognize the letters. Where McCarthy’s “Dartmouth” system relied on deterministic rules written by humans, Rosenblatt’s Perceptron learned patterns from random examples in the machine, making it difficult to pinpoint how it worked based on rigid rules. (If you’re currently experimenting with AI, that may sound frustratingly familiar.)

This reliance on probabilistic randomness—given the same input, the system doesn’t always produce the same output—rather than deterministic rules—given the same input, the system always produces the same output—laid the groundwork for today’s deep-thinking AI. It’s precisely why modern agentic tools are good at detecting patterns in data, including research data. Today, if you work in research and know what you’re doing, it’s possible to apply similar probabilistic approaches to qualitative transcript analysis using custom agentic workflows to detect semantic patterns across hundreds of unstructured, in-depth interview transcripts.

Understanding the distinction between deterministic rules and probabilistic patterns is what matters most for understanding today’s AI landscape, and, as researchers and architects of research systems, it’s important information for how you should, and should not, use AI to automate and augment the research process—because trying to generate certainty from probabilistic deep-thinking AI, or trying to “de-weird” it, is literally a case of fighting the machine.

A Profound Strategic Mistake: Treating AI Like Ordinary Software

In a recent The Economist article (paywalled), Wharton professor, AI expert, and author Ethan Mollick wrote: “...the dominant instinct across the corporate world is to treat artificial intelligence as if it were just another piece of enterprise software....This is a profound strategic mistake. Companies are racing to de-weird AI, and in doing so they are squandering what makes it transformative, turning it into just the latest wave of office automation.” This specific failure is heavily reflected in current market data. Despite Gartner forecasting a massive 40 percent surge in agentic applications by 2026, they also predict that 40 percent of those projects will be cancelled by the end of 2027. Concurrently, in “Rise of agentic AI: How trust is the key to human-AI collaboration,” the Capgemini Research Institute reports that a mere 2 percent of enterprises have successfully scaled agentic workflows.

Particularly as a research systems architect, as you seek to extract efficiencies from AI, it helps to avoid de-weirding it. AI might make parts of the research workflow easier and faster—even instant—but the greater transformation lies in using AI to assess, provoke, and augment human ways of thinking: to turn it into a sparring partner rather than an oracle.

In a very human way, American academic and podcaster Brené Brown and popular science author Adam Grant exemplify this kind of friction. They’ve spent years acting as public sparring partners. By deliberately exposing their different methodologies and pitting themselves against one another, they prove the value of intentional conflict—or antithesis in synthesis. As Brown wrote in her recent book, Strong Ground: “The difficult and disciplined commitment of rethinking and questioning what we know is where Adam’s love of quantitative cognitive science and organizational psychology crashes into my love of the deeply human, and often qualitatively understood, issues of emotions, courage, and vulnerability.”2 For researchers and research systems designers, this is both the conundrum and the opportunity: How should AI be used to enable efficiency without missing the primary purpose of qualitative research to discover “issues of emotions, courage, and vulnerability”?

This is exactly why qualitative analysis is the perfect domain for using probabilistic AI.3 Where quantitative research aims to measure predictable outcomes by flattening human thinking and emotion into simple, easy-to-digest dashboards, qualitative research forces active listening and requires a level of empathy that rigid logic can’t replace. Research professionals need a tool—AI and otherwise—that can actively engage with this messy, weird, ambiguous (probabilistic) qualitative data; a good example of which is research transcript data.

Calibration as a Standard of Practice

Understanding how to actually spar with a machine requires understanding how it’s built. Because my sons are developers working in agentics, I was exposed to this technology early and quickly got up to speed with the technical stack sitting under the hood. I wanted to see if the machine could act as a calibrator rather than an automator. In one experiment, I configured individual agents to analyze a set of research interview transcripts, then presented the output to a senior researcher who had already manually analyzed the same data. After comparing the machine’s work alongside their own, they noticed several artifacts they had missed, as well as several the agent had missed. For example, the researcher had logged irrelevant “psychological scene setting” instead of the actual decision triggers, while the agent had failed to flag when an interviewer asked a leading question, thereby disqualifying the participant’s response. The researcher commented, “It was definitely useful because I can now see how humans and agents can get it wrong. Both need some tweaking.”

Automation bias, or the tendency to over-trust machine outputs because they appear formally structured, has become one of the critical risks in using generative AI today. LLMs are masters at mirroring. A well-formatted LLM summary typically mimics the vocabulary of analysis and looks grammatically clean, but as most researchers will agree, mimicking isn’t the definition of well-executed analysis. That’s not to say that deep thinking AI isn’t a useful partner in the analysis and synthesis process. If a researcher flips the automation bias dynamic on its head, refuses to trust the AI prompt, and instead uses it to test or calibrate their own research standards, the model will stop acting like an oracle and start acting like a research partner. Rather than an error-prone automator, AI becomes a vital structural critic, positioned to point out researchers’ natural human biases. In this case, per Mollick’s article, you’ll leverage rather than squander what makes AI so transformative.

In a recent LinkedIn post, researcher and AI trailblazer, Caitlin Sullivan, summarised this exact shift: “You don’t just build workflows. You systematically test them. You run the same analysis multiple times on the same data to check if findings are stable. You test across models. You know which skills produce consistent results and which ones drift when the data changes, and how to fix them.” In short, researchers move from conducting the analysis to both conducting the analysis and actively governing the analysis and synthesis workflow—or perhaps they’re lucky enough to have a ResearchOps professional in situ to design and maintain parts of that governance for them. Leveraging agentics for mutual calibration changes the role of research analysis entirely. Rather than dedicated analysts and synthesisers, researchers become rigorous testers of the machine’s contextual analysis, elevating their own critical thinking to manage vastly more data touchpoints.

As an organization scales its agentic practices, the core responsibility of ResearchOps must fundamentally shift from procuring isolated tools to architecting governed, systemic workflows. For a next-generation ResearchOps lead, this introduces two vital strategies. First, building agentic networks that orchestrate the broader research lifecycle, and second, training practitioners to use these models for deep analysis and to rigorously audit their outputs and report deviations. These frameworks must be designed to treat the AI for what it actually is: a “mirror” that continually recalibrates and elevates the organization toward greater human-centered rigor. Through this lens, the technology stops functioning as a basic summarization tool and instead operates as a systemic participant in the research pipeline.

Turning Qualitative Standards into Agentic Workflows

One of the weirdnesses of AI is that it’s not a tool that you evaluate against a set of requirements and then procure, configure, and onboard people into the way you would have a SaaS tool a year or two ago. Once it had been configured, the SaaS system was designed to behave consistently, but probabilistic AI isn’t. It changes how the tool and performance quality are assessed, how researchers and ResearchOps professionals work together, and the level of craft a ResearchOps professional must have to do their job well.

As a ResearchOps practitioner, I’ve recently deepened my understanding of qualitative analysis by completing two of Indi Young’s Data Science that Listens (DStL) courses, which rigorously explore the mechanics of concept summaries and emergent patterns. Mastering these specific qualitative research tools is critical for building AI-augmented research operations because they provide the exact cognitive frameworks required to translate chaotic human thought into structured, actionable data—data that’s aligned with the requirements of today’s probabilistic AI.

I specifically use Young’s standard as the baseline for evaluating the quality of the analysis and synthesis results produced by custom-built AI agents. This synthesis acts as the foundation for everything else I do, such as configuring new analytical skills for agentic pipelines, upskilling researchers to actively manage their own agentic models, managing and curating “agent libraries,” and troubleshooting agent handoffs and outputs to ensure they’re following compliance policies and that their tasks aren’t overlapping—all foundational line items that should be on every ResearchOps professional’s to-do list.

The goal isn’t only to enable individual researchers to use AI to deliver rigorous research—or the self-oriented activity of “I-Me-Mine AI,”4 as ResearchOps thought leader Kate Towsey recently called it. Scaling Young’s level of qualitative rigour across dozens or hundreds of practitioners requires shifting focus from delivering isolated tools or training to systemic governance of AI usage to support or augment countless research studies.

On a practical note, agentic ResearchOps revolves around using natural language to write specific analytical skills (step-by-step cognitive descriptions and rules that tell the model exactly how to parse subjective data), which are stored in centralized markdown (.md) files. These files act as strict, governed boundaries that configure the enterprise’s multi-agent workflows. Knowing how to tune these agents or skills might not be overtly technical, but it does require a deep methodological grounding, such as encoding Young’s DStL criteria for “emergent patterns.”

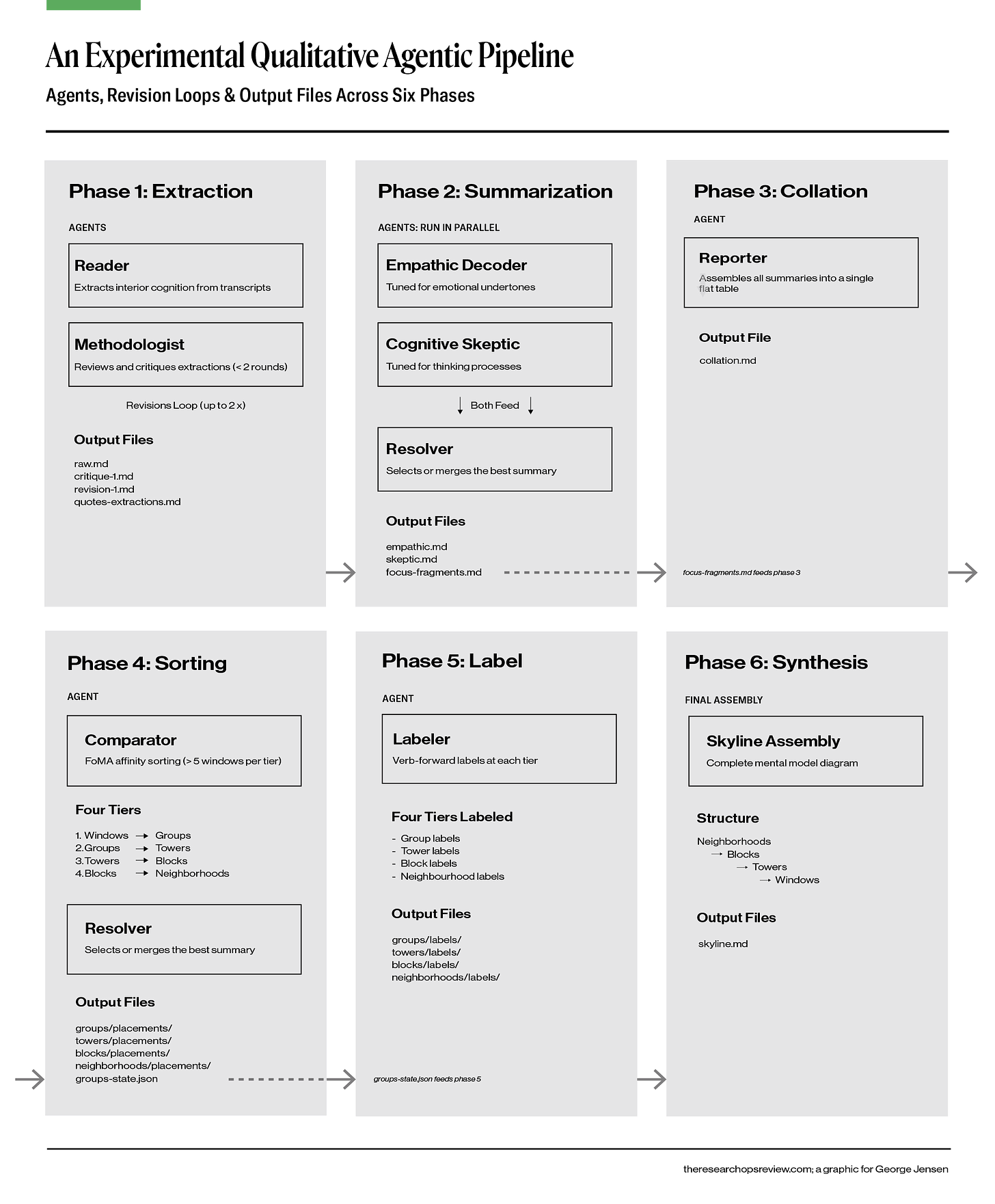

By standardizing these rules, ResearchOps leads can deploy specialized multi-agent networks to support the entire organization (see Figure 2). Instead of deploying a single, error-prone agent for researchers, you could architect specialized clusters or teams of agents, each with its own skillset, each working together to achieve a goal. For instance, you might configure one agent to scan transcripts strictly for empathic markers, a second for cognitive logic, and a third to observe and resolve friction among the agents before presenting the summary to the researcher.

For people who do ResearchOps—the emergence of AI means that, for many researchers, delivering research operations is now a primary part of their role, too—this is the ultimate operational frontier. ResearchOps professionals are literally codifying the organization’s methodological standards into the agentic architecture itself, guaranteeing systemic rigour across every project.

Why Repositories Are a Practical Starting Point

Beyond deep data analysis, research repositories represent the most immediately tractable “integration model” for AI from an operational standpoint. The foundational problem with most traditional repository platforms isn’t technical deployment; it’s that they completely lack the infrastructure required to actively manage a controlled vocabulary. Organizations inevitably suffer from a severe taxonomy problem when traditional metadata remains static while corporate vocabulary continuously evolves. As a result, because historic research is rarely findable or connectable, teams treat repositories like passive filing cabinets. Agentic workflows can solve this exact taxonomy problem by tracking researchers’ semantic searches. Say a product manager searches the repository for “chat interface,” but all the relevant historical research is hidden because it was tagged three years ago under the older term “conversational UI.” An agent can monitor internal keyword searches, identify conceptual gaps, and automatically update metadata across past studies to match the vocabulary the organization is naturally using today. By automating metadata generation based on real retrieval behaviour, the repository becomes a living system that continually refines its search taxonomy. This can be semi-automated, with a librarian reviewing it before the new tags are deployed.

As ontologist Jessica Talisman wrote in “The Ontology Pipeline™, Refresh,” a controlled vocabulary is the absolute foundation of architecture. The taxonomy in your file structures is literally the first layer of governance your agent encounters. If researchers use inconsistent tags, the agent simply inherits and compounds that ambiguity. By forcing a consistent, machine-readable map—deploying strict frontmatter fields for domains, themes, and specific thinking styles—the agent can securely route and filter at the metadata level, protecting the LLM usage budget and behaving strictly according to our defined rules.

This type of hybrid workflow illustrates Ethan Mollick’s earlier point: if we treat AI merely as an automation efficiency tool, we miss its true value. Here, the machine isn’t replacing the librarian; it is acting as an active sparring partner, introducing helpful friction by challenging the organization’s outdated vocabulary and forcing the human to actively mature the taxonomy. This also has important implications for operational budgets.

When I cloned copies of my son’s agentic setup and ran them locally on Opencode using a Gemini integration, I racked up a large bill for LLM tokens in just one month. Translated to scaled-up enterprise costs, I quickly realised that making agentic-driven research viable for large organizations requires more than just enabling effective LLM usage; it must also be cost-efficient. Based on my experience spanning both the tech and research sectors, scaling agentic AI is rarely a technical deployment challenge; it’s fundamentally a governance and contextual integration challenge.

Without a robust taxonomy intentionally built into file names and frontmatter (metadata, or the elements that introduce the reader to the body of a document), an agent crawling the repository has no explicit map; it is forced to open every single file and read its contents just to determine relevance. Every token the model is forced to read burns operational budget and rapidly consumes precious context window limits. Cost control isn’t just an IT concern; if ResearchOps professionals aren’t actively protecting operational budgets through disciplined taxonomy work, enterprise leadership will simply pull the plug. A mature system will know how to cache and reuse LLM outputs without burning expensive tokens on repeated prompts. And agents running on self-hosted open-source LLMs won’t solve this problem, because you simply inherit the same token ceiling on hardware you now have to maintain yourself.

From Adoption to Integration

Reading the history of agentic AI, particularly the long period between Rosenblatt’s Perceptron and the deep learning revival, dominated by OpenAI and Anthropic, is useful for research systems builders because it shows how long genuine foundational shifts take to stabilize. The lesson isn’t simply that things take time; it’s that without that historical framing of the journey, the cycle repeats: a capability arrives, expectations inflate, integration is rushed, the tools underdeliver against expectations, and the field either dismisses them or defaults to the next trend. The massive project cancellations predicted by Gartner and Capgemini are a direct symptom of this cycle. My opinion is that most of the AI capabilities being marketed to research organizations are in the adoption rather than the integration phase. That doesn’t mean that these capabilities should be ignored; it means they should be trialed with appropriate methodological controls rather than deployed as finished infrastructure.

Making agentics-driven research systems work for the large enterprise isn’t predominantly a technical challenge; it is a governance challenge. First, you must govern quality: the strict analytical and taxonomical boundaries and review loops that keep machine-assisted synthesis methodologically sound. Second, you must govern the financial implications: the information architecture that prevents token burn and keeps agentic workflows affordable at enterprise scale. Finally, you must govern the risk: the systemic accountability ensures that AI remains an introspective sparring partner, rather than an oracle permitted to shape decisions unchecked.

If we stop treating generative AI as an automated oracle, we can begin architecting the custom, well-governed workflows our research pipelines actually need. By using the technology as a mirror rather than a replacement, ResearchOps leads can continually recalibrate and elevate our systems toward a richer outcome of human-centred rigour. Research relies on our ability to listen, adapt, and evolve together. Let’s pave the way for a research practice as diverse as the world it seeks to understand.

The ResearchOps Review is made possible by User Interviews, now part of UserTesting. With a vast participant network, precise matching, and fraud prevention, User Interviews can reliably fill any research study. Source, screen, track and pay participants, then move seamlessly from data collection to deep analysis, all in one place. → Learn more about User Interviews for ResearchOps.

View this sample glossary exploring the common terms that encompass the range of meanings for 'AI'.

Brown, Brené. 2025. Strong Ground: The Lessons of Daring Leadership, the Tenacity of Paradox, and the Wisdom of the Human Spirit. Random House. https://brenebrown.com/book/strong-ground/.

Deterministic AI models are in use today. Examples include traditional search algorithms, industrial automation, rule-based expert systems, chess engines (without neural network components), and basic recommendation systems.

Towsey, K. (26, March 5). The Research Operating System Too Few Are Building: Why “I-Me-Mine AI” Isn't Enough. The ResearchOps Review. Retrieved April 23, 2026, from https://www.theresearchopsreview.com/p/a-wake-up-call-for-researchops

Very informative article George. I love the summary line "If we stop treating generative AI as an automated oracle, we can begin architecting the custom, well-governed workflows our research pipelines actually need."

Loved this piece, George. Great citation for a series I'm outlining on this topic 🙌