The Research Operating System Too Few Are Building: Why “I-Me-Mine AI” Isn't Enough

by Kate Towsey


The ResearchOps Review is brought to you by Rally—scale research operations with Rally’s robust user research CRM, automated recruitment, and deep integrations into your existing research tech stack.

In 2023, Jakob Nielsen published a series of articles urging UX professionals to embrace AI without delay or risk professional obsolescence: “either you’re the windshield, or you’re the bug,” he wrote.[1] That’s to say, if AI were a high-speed car, a windshield would master it; a bug would be squashed by it. “It’s not AI that will snatch your job,” he wrote, “but the individual leveraging AI to outpace your performance.” Three years later, Nielsen’s articles look remarkably prescient.

AI is fundamentally changing how research operates (to be accurate, how everything operates), and with it, the research capabilities and responsibilities of everyone in its orbit. Researchers intent on being the windshield are leveraging AI to do more than simply alleviate logistics or speed up the doing of research (the past purpose of research operations); they’re also using AI to expand, or augment, their purpose: in the midst of doing research, they’re drafting product briefs, building lightweight prototypes, writing on-brand copy, and even making incremental product changes.[2]

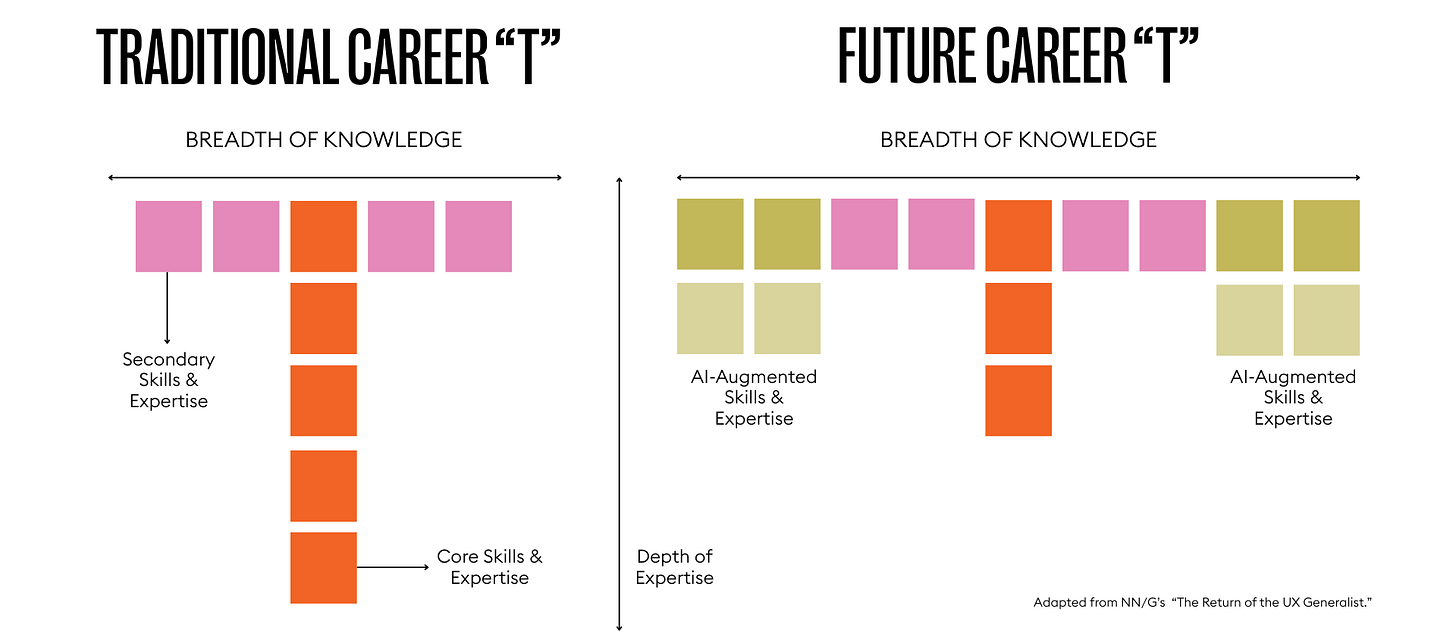

In an article published in March 2025, NN/G offered that “AI is broadening the scope of what any individual can accomplish, regardless of their specific expertise.”[3] (See Figure 1.0.) The notion of “experience designers” who, with the help of AI, can cover everything from content strategy to service design and research to frontend development is on the rise. AI is prompting entire specialisms to become democratised. (At this point, the idea that research leaders might choose whether research is democratised or not feels almost quaint!)

From Individual Expertise to Shared Systems

It would be easy to assume that this “expanded-T” trajectory will further isolate disciplines or lead to an extinction event, wiping out entire specialisms.[4] If everyone can do everything on their own, or rather, with an LLM or team of skills (the Claude kind), or agents in tow, then why have all these specialisms, and why work together?

In reality, the opposite is true. AI is currently only capable of delivering value (more on this later) when humans use AI to augment their own specialist intelligence and skills. To achieve these augmentative “wins,”[5] teams working across the product development lifecycle (PDLC) must collaborate to build and train common-use AI agents, establish templates, and design workflows that enable non-specialists to independently deliver parts of their job with the help of AI. But systems are as much about control as they are about enablement, and it’s the system owner who tends to set the controls. So teams must also collaborate to establish guardrails, set standards, and formulate risk profiles for the parts of their jobs that should not be democratised or handled by, or via, AI.

The word “must” features twice in the above paragraph, which raises the question: Why must we do any of this work? Because democratisation is no longer a choice.

The research profession has been grappling with democratisation for more than half a decade. In practice, that has usually meant providing resources and support to whoever wanted to do research: an egalitarian approach that rarely delivers return on investment to the organisation. But the accelerative, magnifying effect of AI has made the design of priority-and-risk-based research systems urgent. Never mind poorly facilitated user interviews; it’s no longer science fiction that a program manager or an engineer might deliver an advanced quantitative research study, replace customers with synthetic personas, or launch dozens of AI-moderated interviews overnight. Research teams must set standards and create workflows for when and how AI should, and should not, be used.

It would be tempting to assume that ResearchOps professionals (practitioners in a profession that emerged precisely to handle these systems and governance tasks) are currently consumed with designing well-articulated AI-augmented research operations. Plot twist: that’s not the case.

Every week, I engage with dozens of ResearchOps professionals as the editor-in-chief of this publication and as an advisor and educator, giving me an elevated perspective on what ResearchOps professionals are thinking about—and sometimes what’s on their roadmaps. Earlier this year, I ran a workshop with thirty ResearchOps professionals from across industries to explore what was on their 2026 roadmaps. Attendees collectively shared 170 tasks; remarkably, only twenty-nine were AI-related. That’s just 17 percent.

ResearchOps professionals aren’t innovating or augmenting operating models at speed behind closed doors, bereft of time to share their learnings. Instead, for a variety of systemic reasons, they’re late to the party—the most important party yet—and researchers are taking the lead. That researchers are taking the lead isn’t the issue. The greater concern is that neither researchers nor ResearchOps professionals are paying attention to the systems- or organisation-level design of AI-augmented research, which is problematic (extinction-level problematic) for several reasons.

The Hidden Cost of “I-Me-Mine AI”

There are four clear camps in the AI research space. Those who don’t want to use AI at all are in camp one, but public discourse indicates this group is shrinking. In camp two are those who are interested in AI but are too time-poor, energy-poor, or overwhelmed to take the leap. In camp three are those using AI to speed up or lighten the load of their own work (I call this “I-Me-Mine AI” to play off the title and lyrics of a Beatles song).[6] Finally, in camp four is an alarmingly small cohort setting collective standards or leveraging AI at the operating system level.

To go back to Nielsen’s quote from 2023: “It’s not AI that will snatch your job, but the individual leveraging AI to outpace your performance.” Three years later, in an agentic age, I would argue that it’s not AI that will snatch your job, but the team, the discipline, or, more pointedly, the operating system that’s leveraging AI to outpace your performance.

Because UX roles are becoming less specialised, your individual value (the value that might win you a promotion) depends on whether you can do that “expanded-T” set of jobs, or “jobs to be done” (JTBD),[7] well. However, the past few years have taught too many people that individual value isn’t enough to protect a role. Job security rests on your discipline’s organisational value (the executive-level value that keeps your discipline employed), which depends on how effectively you and your team can enable others to do parts of your job, all of which hinges on the design and delivery of scalable operating systems. This phenomenon is far from new (it has been defining research teams over the past half-decade via democratisation), but as mentioned earlier, AI is amplifying the dynamics, and the opportunity.

An Operational Imperative

While the generalisation of UX roles has largely been prompted by artificial intelligence (pun intended), it is the recent shift in how corporations operate that is driving an evolution in research operations: the JTBD and the role, too.[8] “The great fiscal reset” that has characterised the lives of tech workers since 2022 has led to the destruction of many research teams in favour of skeleton-crew research operations functions tasked with democratising research.[9] To go back to NN/G’s “expanded-T”: just as UX roles are converging, in many organisations the line between research and research operations is blurring, too. Every week, a researcher messages me to let me know they’re now doing research operations, or a ResearchOps professional lets me know they’re working solo, that’s to say, without the partnership of a research leader.

The growth trajectory of research teams is in the process of being flipped, a point that is highly relevant in an AI-augmented, profit-oriented world: where research leaders once built a team of user researchers, then constructed an operations function beneath them, ResearchOps professionals (or research professionals who have absorbed operational responsibility) are now building operations programs focused on democratisation, then making the case for research headcount on the strength of the scaled-up value they’re delivering. In many cases, democratisation is no longer a research leader’s choice—if they’re there to make the choice at all—but an operational imperative. The message from corporations is this: we want you to deliver systems that empower a spartan, generalist workforce, then we’ll invest in specialists where they’re most valuably employed.

This is precisely where ResearchOps should be stepping in—or where researchers now working operationally should focus their efforts. ResearchOps exists explicitly to build and maintain the infrastructure that makes accessing research insights (not just doing research) possible at large, and even super-large scales. In an AI-accelerated research environment, that work hasn’t become less important; it’s become urgent, not only because organised is nicer than disorganised, or orchestrated is more efficient than not, but because this is an unprecedented opportunity for research and ResearchOps professionals to secure a high-power seat at a table that’s desperate for trustworthy knowledge, and desperate to make the most of AI.

From Individual Efficiency to Organisational Impact

A 2025 PwC survey found that 56 percent of CEOs reported their companies were not yet seeing financial returns from AI investments.[10] Writing in the Harvard Business Review in February 2026, Prabhakant Sinha, Arun Shastri, and Sally Lorimer concluded: “In most organisations, digital underperformance isn’t a failure of change management, but a mismatch between new ways of working and old organisational designs.”[11] Though few ResearchOps professionals are delivering AI-augmented workflows, whether new or based on existing ones, there are pockets (a vague designation, I’ll admit; the number is currently unquantifiable) of researchers delivering AI-augmented research operations. An excellent, publicised example comes from the DoorDash research team. Their articles “Researchers Are Rewriting the Playbook with AI” and “How Cursor & Claude Code Are Changing Research at DoorDash and Deliveroo” are essential reading, in which they share how the company is systematically building AI-augmented research operations from the ground up. Indeed, the articles give the impression that the entire organisation is on the same trajectory, which tends to help with innovation.

DoorDash’s forward-thinking approach is inspiring because AI-in-research content is predominantly self-focused: I-Me-Mine AI (researchers using AI to augment their personal efficiency) is the dominant “operating model,” with little, if any, conversation dedicated to continuity across individuals or research teams, let alone the wider organisation. This is problematic because, unfortunately, corporations don’t invest in or maintain teams simply because the individuals working in them are more efficient or happy. If the research profession’s I-Me-Mine approach to AI doesn’t change, it will be a case of history repeating itself.

When ResearchOps first became widely recognised as a profession, circa 2018, research leaders were making a strategic misstep: typically, people were hired into ResearchOps roles to help make research (in effect, researchers) more effective and efficient. Rather than being tasked with delivering value to the organisation, their work tended to focus on, for instance, unburdening researchers of the task of participant recruitment by assuming the duty altogether, building a library to enable secondary research, or handling the undesirable work of procurement and vendor management. It’s not to say that no value was delivered, but did the corporation earn more money because their researchers were better prepared, enjoyed their work more, and were more focused on delivering research? In most cases, the answer is no.

The Clock Is Ticking

To return to the Harvard Business Review article, consider this observation related to commercial AI efforts: “Repeatedly, executives tell us that digital initiatives fall short of expectations: improving execution but not strategy, increasing efficiency but without freeing capacity for higher-value work, showing strong adoption but limited business impact, and creating disconnected solutions that don’t fit with existing workflows.”

From the corporate perspective, the value of AI doesn’t lie in what individuals can do to augment their own workflows, for themselves—useful for building initial ideas and confidence, but a narcissistic and limiting approach if it becomes the status quo. I-Me-Mine AI might enable an individual researcher to save hours on analysis and synthesis, or reclaim time for more strategic thinking. But unless AI experimentation and adoption deliver tangible, cross-functional efficiencies to the organisation (an operations-level boon that’s obvious to a CEO), the research profession—and especially the ResearchOps profession—will have missed the moment. And what a moment it is.

AI is, without doubt, going to revolutionise how research is conducted and consumed across organisations, with or without researchers or ResearchOps in charge. One needn’t be Nostradamus to make that prediction! AI is powerful and empowering enough that, if research professionals don’t get ahead of building scaled-up research systems and purposefully setting standards for how they and others in their organisation do, create, or consume AI-enabled research, someone else will—perhaps with less concern for methodology, rigour, and ethics. This is the immediate opportunity for research and ResearchOps professionals, and now is the time because the canvas won’t remain blank forever, habits and preferences will become embedded (and oh-so-much harder to change), and the opportunity to naturally lead the design of organisation-wide systems is finite. The clock is ticking. Some might say it’s a time bomb. (At this point, I must reference another musical classic, Rancid’s “Time Bomb.”)

You Already Have the Required Skills

Researchers paired with ResearchOps are better placed than most to deliver AI-augmented systems that are impactful organisation-wide and prized at the executive level. To do this, you’ll need to develop AI literacy, for sure (see Anthropic Academy), but you’ll also need to reimagine the purpose of your role (it’s being reshaped around you anyway) and make new use of your specialist skills.

The following list will sound so familiar it might even seem trite, but that’s the point. To seize the moment, you must hone your inner:

Service or system designer. Take a step back and study the full ecosystem; don’t dive in with a limited view.

Researcher. Learn from individual researchers or people who are doing or consuming research to identify good and bad practices. How are people approaching AI? What’s worth repeating? What’s worth designing out?

Strategist. Pick the most value-adding and low-risk places to implement AI. Where does AI augment human intelligence and skill? Where does speed threaten rigour? Where is rigour most important?

Change manager. Gather the makers and innovators in your organisation into a working group or council to encourage collaboration and the development of shared systems and rules. Governance is always better when it’s developed by a group.

Communicator. Start talking about what’s working and what isn’t working. Find ways of sharing your learnings to bring others on the journey, establish the value of your work, and help others build on your learnings.

Above all, you must switch the conversation from “Where should I use AI in my workflow?” and “Which AI tools should I use?” to “Where should we use AI in our workflow?” and “Which AI tools should we use?” Through action, language, and politics, you must design the culture in which your AI-augmented research operations will live—and the future of research in your organisation.

The Conversation Is Yours to Lead

The breakdown of silos and the expansion of roles across UX mean that shared technologies, standards, and cross-functional collaboration are more essential (and powerful) than ever, but someone has to lead the conversation. It might have been assumed that ResearchOps would lead it, but researchers are centre stage right now.

It’s excellent news that the conversation is happening, no matter the altitude and who’s leading it. But to summon Nielsen’s windshield-bug analogy one last time, when it comes to AI-augmented research, without a significant shift, ResearchOps professionals are collectively in danger of becoming the bug, no matter how good they are at prompt engineering. More importantly, the research profession as a whole is in danger of letting the power of the moment pass it by: a real chance to get ahead of everyone else in setting system- and operating-level standards, and the future agenda for research.

“I Me Mine” was the final new song recorded by The Beatles before their 1970 breakup. Written by George Harrison, it’s a commentary on the human ego and the tendency for humans, and perhaps AI (time will tell), to look after themselves. But change often requires just one person to think of the whole; to bring people together and design a mutually beneficial system.

Sponsor and Credits

The ResearchOps Review is made possible thanks to Rally UXR—scale research operations with Rally’s robust user research CRM, automated recruitment, and deep integrations into your existing research tech stack. Join the future of Research Operations. Your peers are already there.

Edited by Kate Towsey and Katel LeDu.

[1] Nielsen, Jakob. “UX Needs a Sense of Urgency About AI.” Jakob Nielsen on UX. June 15, 2023. https://jakobnielsenphd.substack.com/p/ux-needs-a-sense-of-urgency-about.

[2] Ho, Elsa. “How Cursor & Claude Code Are Changing Research at DoorDash and Deliveroo.” Medium. February 13, 2026. https://medium.com/design-doordash/how-cursor-claude-code-are-changing-research-at-doordash-and-deliveroo-c2b534018af5.

[3] Gibbons, Sarah, and Evan Sunwall. “The Return of the UX Generalist.” NN/G. March 28, 2025. https://www.nngroup.com/articles/return-ux-generalist/.

[4] The extinction of the dinosaurs was one of the “big five” mass extinction events of the last 500 million years.

[5] It’s too early to tell what the explicit wins and losses of AI augmentation are.

[6] “I Me Mine” was the final new song recorded by The Beatles before their 1970 breakup.

[7] JTBD is “a lens through which you can observe markets, customers, needs, competitors, and customer segments differently, and by doing so, make innovation far more predictable and profitable.” (See “What Is Jobs-to-be-Done?”)

[8] I wrote about this phenomenon in “Why the Distributed Growth Model Is Failing Research Teams—and What to Build Instead,” a recent article for The ResearchOps Review.

[9] Towsey, Kate. “Why the Distributed Growth Model Is Failing Research Teams—and What to Build Instead.” The ResearchOps Review. February 28, 2026. https://www.theresearchopsreview.com/p/why-the-distributed-growth-model.

[10] PwC, Global CEO Survey, 2025; cited in Sinha, Prabhakant, Arun Shastri, and Sally Lorimer. “Why Your Digital Investments Aren’t Creating Value.” Harvard Business Review. February 17, 2026. https://hbr.org/2026/02/why-your-digital-investments-arent-creating-value.

[11] Sinha, Prabhakant, Arun Shastri, and Sally Lorimer. “Why Your Digital Investments Aren’t Creating Value.” Harvard Business Review. February 17, 2026. https://hbr.org/2026/02/why-your-digital-investments-arent-creating-value.
